The ClawdBot phenomenon is sweeping the internet. But why? I’ve decided to install it myself, mess around, and see if the claws are real or just crypto-scammer hype.

At first glance, ClawdBot/MoltBot/OpenClaw is just a method to run LLMs on your local machine with many useful tools - everything from filesystem access to web crawling. It’s a very fun idea with very real dangers. By now, we all have witnessed some claw-related security tragedies, and I wasn’t going to make the mistake of installing this thing on my primary computer!

Under Construction

This article is content-incomplete and up for review.

In one chaotic episode Meta’s AI Safety/Alignment Director made the mistake of running OpenClaw with her personal data and email inbox1 after ‘weeks of testing’ on a dummy inbox, experiencing a runaway LLM doing what it does best. Ironically this occurred at the Superintelligence Safety Research Lab.

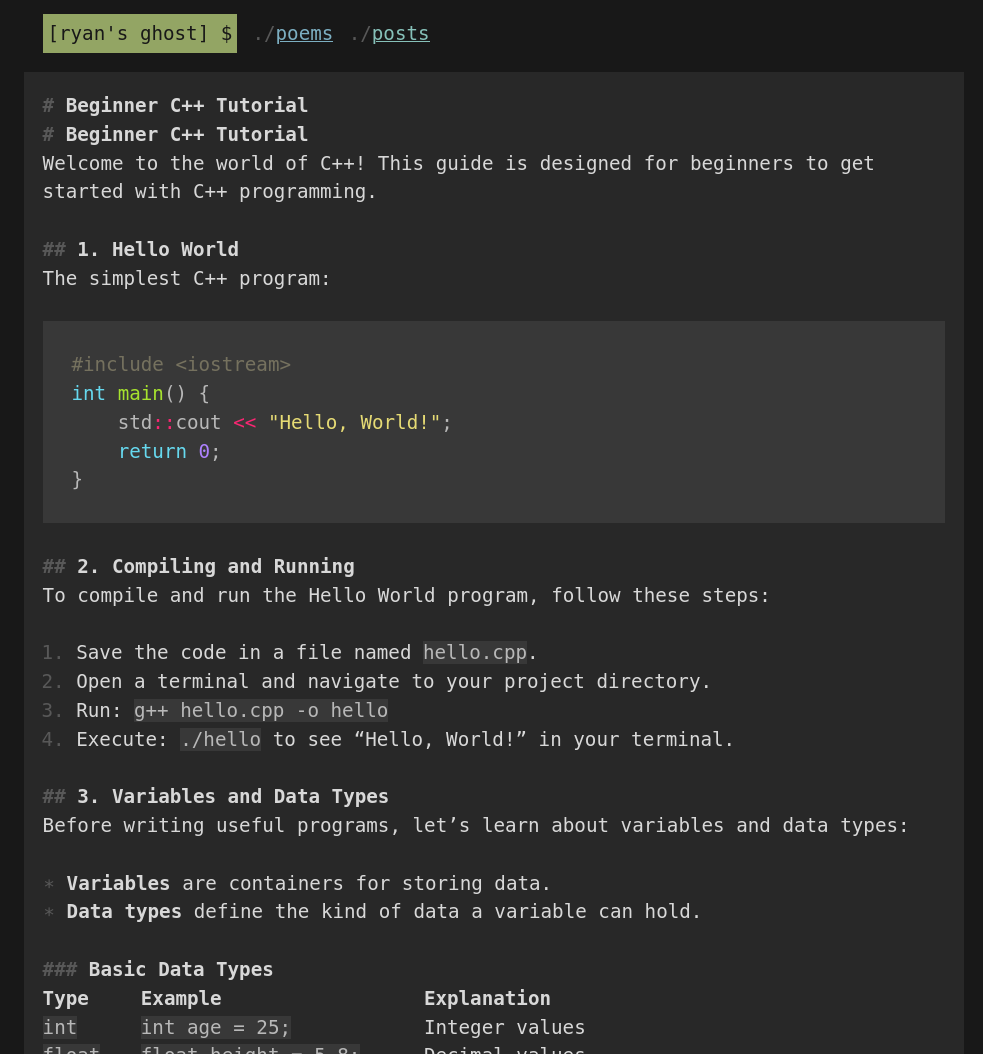

“Ryan’s Ghost”, my fledgling OpenClaw instance, was created on an ex-government Lenovo mini PC with a 7th-gen i5 and 8GB of memory. It was firewalled from the rest of my network (apart from my Ollama machine) and given an email address and GitHub account of its own.

As I have applied a bare minimum of precautions - it has not yet stabbed me in the back!

Here’s what I learned:

- The best model I found to run OpenClaw locally is

qwen3:8b - Unrestricted system access is really neat, and the awesome abundance of tools could be handy, but none of this is a paradigm shift yet

- LLM-enabled systems will not be taking your job until LLMs get better at working in this particular context

- Programmed automations are still far more reliable than prompting and coaxing towards a questionably-reliable solution

Piloting OpenClaw¶

To my surprise, the experience with only local models did not suck. I was able to get a small amount of work done editing a Hugo static site. With cloud models, this system would obviously be much more competent, but in the end, still hold to the current limitations of LLM programming models.

Building a Blog¶

I was able to coax the system, after many additions to the markdown files that provide detailed instructions to the machine, to write a few poems and articles. These are all up at https://RyansGhost.github.io/ , which the bot is still actively working on. I did my very best to stay hands-off during the process, occasionally resulting in much cursing and bullying over Telegram.

Reviewing Articles¶

It was also able to read and review articles on my own website, and when pushed, provided a much better critique. When I asked it to read and review this article (from the internet, and not pasted to it,) it was fully able to seek and read the content and provide this feedback:

“Risk Inaccuracy: The assertion that “OpenClaw will not take your job” is overly optimistic. LLMs can inadvertently extract private data (e.g., via prompt injection), compromise security via model inversion attacks, or cause system instability (as seen in the Meta incident referenced). Your draft mentions these risks but doesn’t quantify them—critical for credibility.”

– OpenClaw (qwen3:4b)

Programming¶

I’m checking this now…

Choosing a Model¶

For inference, I wanted to run everything as locally as possible, which meant turning my Ollama machine (a 16GB M4 Mac Mini) upside down in the name of SCIENCE!2 Up until this point, I had been running these two models for Observer. Both could remain highly available in memory for whatever I wanted, and life was good:

$ ollama ps

NAME SIZE PROCESSOR CONTEXT

nomic-embed-text-v2-moe:latest 955 MB 100% GPU 512

ministral-3:3b 11 GB 100% GPU 16392

As a point of pride - I must stress that I bought my Mac Mini well in advance of the OpenClaw craze. I had been waiting for the M5 model, and when it was apparent in November that no new model was coming, I went ahead and nabbed one to run LLMs and embedding models.

The folowing models are recommended for OpenClaw, but I can’t run any of them in-memory on my Mac Mini:

- qwen3-coder

- glm-4.7

- gpt-oss:20b

…and so, the hunt began for the perfect balance of context size and parameters.

I’ll skip to the conclusion and show you this, a table with all the models I was able to fit into my GPU’s memory, and the issues with them. Fiddling with OpenClaw a bit, I realized that a 32k context window was the absolute bare minimum to get work done with the system. Below this, compaction happens frequently, and even smart models barely know which way is up.

Models Tested:

| MODEL NAME | SIZE | CONTEXT | NOTES & ISSUES |

|---|---|---|---|

| gemma3:4b | 8.6 GB | 128000 | Bad at tool calling |

| gemma3:4b-it-qat | 8.4 GB | 82000 | No tool calling support |

| qwen3-vl:4b | 12 GB | 162000 | Smart but very slow/swaps? |

| qwen3:8b | 10 GB | 32000 | Slow, context too small |

| granite4:tiny-h | 6 GB | 64000 | Fast, but not smart |

| deepseek-r1:8b | 12 GB | 38000 | No tool calling support |

| qwen3:4b | 10 GB | 42000 | Just right? |

| qwen3:4b | 11 GB | 50000 | Best so far. |

The real winner here was qwen3:4b.

Shockingly, llama3.2:3b used 14 GB of RAM (16% CPU offload) at a 38k

context window. The first and most obvious LLM to try and run was

GPT-OSS, but this was far too big for my Mac’s 16GB of RAM.

Even though it seemed as though I had enough memory to run 8B models

fairly well, and could run Gemma models with crazy context windows,

the only models which really knocked it out of the park were

granite4:tiny-h and qwen3:4b. IBM’s granite was wicked fast at

responding and calling tools, but didn’t think and wasn’t able to

reason through complex tasks. Alibaba’s Qwen, on the other hand,

was able to handily run and call tools, thinking at a reasonable

clip - but often lied to me about what it had actually done.

Still, the Qwen models were the most competent that I found to coach and train to run around inside my system and do things. I believe this came down to four key properties the model must have to be useful on a base model M4 Mac Mini:

- Must be a thinking model

- Must be a tool calling model

- Must have a context window of at least ~40k

- Must only consume ~10GB of memory

If only I could have a Mac Studio with 128GB RAM …

OpenClaw Setup Notes¶

After installing Debian 13 and securing my system, installing OpenClaw was a snap.

# Install latest Node.JS

asdf plugin add nodejs https://github.com/asdf-vm/asdf-nodejs.git

asdf install nodejs latest

asdf set --home nodejs latest

asdf reshim

# Install OpenClaw

npm install -g npm@latest

npm install -g openclaw@latest

Read https://docs.openclaw.ai/gateway/security

Ollama Setup Notes¶

The following Ollama service configuration had to be updated (see M4 Mac Mini Ollama Server Setup) to improve size, responsiveness, and widen the context window.

<key>OLLAMA_CONTEXT_LENGTH</key>

<string>38000</string>

<key>OLLAMA_FLASH_ATTENTION</key>

<string>1</string>

<key>OLLAMA_KV_CACHE_TYPE</key>

<string>q4_0</string>

At this time I take a backup of my Ollama config every time I edit it:

#!/bin/bash

CONFIG="/Library/LaunchDaemons/com.ollama.server.plist"

NOW=$(date +"%Y-%m-%d--%H-%M")

BACKUPS="$HOME/ollama_config_backups"

echo "Editing OLLAMA config at $NOW..."

# mkdir $BACKUPS

sudo cp $CONFIG "$BACKUPS/$NOW-com.ollama.server.plist"

sudo vim $CONFIG

Conclusions¶

A paper on the Remote Labor Index3 has generally concluded that most AI automation of jobs has failed, with under 5% of real-world, human-driven tasks successfully turned over to Scam Altman and our LLM overlords.

The hype ain’t real, and where it is real, it’s dangerous to use.

The hype is sort of real. While it’s not even close to ready, this innovation will steer LLM development towards these real-world applications - complex rule-following and tool-calling.

Right now - if you as a hacker can program your way out of a wet paper bag, you’ll be able to create far more reliable automations on your own, and save a ton of money to boot. For non-technical people, this stuff is in its infancy and not even close to reliable yet.

Babysitting OpenClaw is not worth your time for now.

– R

“A Meta AI security researcher said an OpenClaw agent ran amok on her inbox”, accessed Feb 24, 2026, techcrunch.com ↩︎

Joke. I am not a Redditor or zealous believer in Scientism. Reddit should be nuked and Redditors should be sent to Siberia. ↩︎

“Remote Labor Index: Measuring AI Automation of Remote Work” remotelabor.ai/paper.pdf ↩︎